I just released RelStorage 1.4.0b1. New features:

- More documentation.

- Support for history-free storage on PostgreSQL, MySQL, and Oracle. This reduces the need to pack and makes RelStorage more appropriate for session storage.

- Speed. New tests prompted several optimizations that reduced the effect of network latency in both read and write operations. Memcached support is now integrated in a much better way.

- Support for asynchronous database replication. Previous versions of RelStorage worked with MySQL replication, but did not keep ZODB caches in sync when failing over to a slave that was slightly out of date.

- The Oracle adapter now uses PL/SQL for speed and lock timeouts. Lock timeouts are important for preventing cluster lockup.

- Moved the speed test script into a separate package named zodbshootout, making it easier for developers and administrators to run comparative performance tests.

- The adapter code is more modular, making it easier to support new kinds of databases and database adapter modules.
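To make the history-free item above concrete, here is a toy in-memory model. It is not RelStorage's code: the class and method names are hypothetical and there is no database involved. It only sketches why a history-preserving layout accumulates old revisions that need packing, while a history-free layout overwrites each object in place.

```python
class HistoryPreservingStore:
    """Toy model (hypothetical): keeps every revision, keyed by (oid, tid)."""

    def __init__(self):
        self.rows = {}  # (oid, tid) -> state

    def store(self, oid, tid, state):
        self.rows[(oid, tid)] = state

    def load(self, oid):
        # Return the state stored under the highest tid for this oid.
        tid = max(t for (o, t) in self.rows if o == oid)
        return self.rows[(oid, tid)]

    def pack(self):
        # Drop every revision except the newest one per oid.
        latest = {}
        for (oid, tid) in self.rows:
            latest[oid] = max(tid, latest.get(oid, tid))
        self.rows = {(o, t): self.rows[(o, t)] for o, t in latest.items()}


class HistoryFreeStore:
    """Toy model (hypothetical): keeps only the current state per oid."""

    def __init__(self):
        self.rows = {}  # oid -> (tid, state)

    def store(self, oid, tid, state):
        # Overwrite in place; no old revisions pile up.
        self.rows[oid] = (tid, state)

    def load(self, oid):
        return self.rows[oid][1]


# Simulate a session object that is rewritten 100 times.
hp, hf = HistoryPreservingStore(), HistoryFreeStore()
for tid in range(1, 101):
    hp.store(1, tid, "session-%d" % tid)
    hf.store(1, tid, "session-%d" % tid)
```

After the loop, the history-preserving model holds one row per write until `pack()` runs, while the history-free model holds a single row. That is the pattern that makes frequently rewritten data such as sessions a better fit for the history-free layout.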

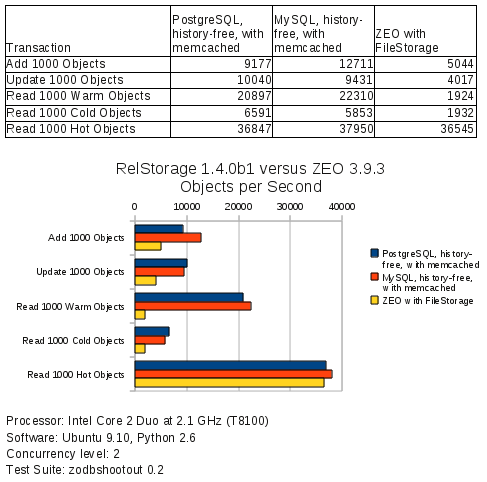

The zodbshootout script tells me this release of RelStorage is faster than ever. It reports objects read or written per second, so unlike the previous charts I’ve made, bigger is now better. Here are the results:

PostgreSQL now beats MySQL in some of the tests. Oracle (not on this chart) is now looking pretty good too.
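For readers comparing these numbers with a lower-is-better measure such as elapsed time, the conversion is simple arithmetic. The helper below is a hypothetical illustration, not part of zodbshootout, and the figures in it are made up for the example rather than taken from the chart.

```python
def objects_per_second(object_count, elapsed_seconds):
    """Convert a timed batch into a throughput figure (bigger is better)."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return object_count / elapsed_seconds


# Illustrative numbers only: the same batch size timed twice.
slow_run = objects_per_second(1000, 0.5)
fast_run = objects_per_second(1000, 0.25)
```

Halving the elapsed time for the same batch doubles the throughput, so a faster configuration shows up as a taller bar.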

The new features led to far more automated tests. My private Buildbot, which tests RelStorage with several combinations of Python, ZODB, and operating systems (in virtual private servers), now takes 2 hours to run all the tests. Maybe I need to upgrade that server or investigate the possibility of making Buildbot launch an Amazon EC2 instance.

The previous release was 1.3.0b1, which added ZODB blob support. Several customers asked for new features right after I released 1.3.0b1, so I decided to jump to version 1.4.0b1 rather than finalize the 1.3 series. The 1.2 series has had more extensive testing, so stay on that for a while if you have trouble with 1.4.0b1.

This new release should be particularly interesting for Plone users, since Plone is always hungry for faster infrastructure.

Cool, thanks for sharing the results, and for making the test code available as a separate package.

Any more info on RelStorage + MySQL + master/slave replication? We’re interested in setting Plone up that way.

Hi Shane,

I’m also interested in getting more information about RelStorage + MySQL + master/slave replication. Has this configuration been documented anywhere?

thanks,

Nate

Hi Shane,

Subject: RelStorage support for Microsoft SQL Server

I have read that this is difficult to implement because the “two-phase commit” feature is not exposed by MS-SQL Server.

Is there a solution to this problem that does not introduce too many layers?

Can we use PyMSSQL and the ADODB Python extension to implement a RelStorage adapter for MS-SQL?

Has anyone tried this before?

Are there any other major concerns you would have about developing an MS-SQL adapter component?

Will you help us develop the MS-SQL adapter for RelStorage?

Please point us in the right direction on the subject.

Thanks

Sachin Tekale